The way we find information is changing fast. Technical SEO for AI discovery is all about optimising your website’s core foundation so that AI systems like ChatGPT, Perplexity, and Google’s AI Overviews and AI Mode can easily find, understand, and trust it. It’s a move away from simply ranking for keywords: the new goal is to become a citable, authoritative source that AI models draw on when they craft answers to complex questions. This puts a heavy emphasis on things like structured data, clean site architecture, and content clarity.

The New Reality of Conversational AI Search

Let's be honest, the days of just a search bar and ten blue links are numbered. People are now turning to conversational AI platforms, asking detailed, multi-part questions and getting back direct, synthesised answers. This is a massive shift in how users behave, moving from choppy keyword searches to natural, flowing dialogues.

For any business, this means the old SEO playbook just won’t cut it anymore. Your site's technical setup needs to cater not only to human visitors and traditional search crawlers but also to the large language models (LLMs) powering these new discovery engines. The game has changed from ranking for a keyword to becoming a trusted source the AI cites in its responses.

Why Your Current SEO Might Fall Short

While the fundamentals of traditional SEO still have a place, they don't quite cover what AI needs to see. Here’s why we need a new approach to prepare for AI-driven search engines:

- Context Over Keywords: AI understands natural language and user intent. It's on the hunt for deep, well-structured information that directly solves a problem, not just a page stuffed with a target keyword. Semantic keywords and entity recognition are paramount.

- Trust and Authority: AI models are built to pull information from sources they consider credible. Technical signals—like a logical site structure, comprehensive structured data, and fast performance—act as strong proxies for trustworthiness.

- Data, Not Just Content: AI consumes data, not just words. It needs your content presented in a machine-readable format to grasp the relationships between different concepts (or 'entities') on your site.

To really get a handle on how much the search world is changing, it's worth digging into how Google's AI Mode works and what it means for SEO. This shift demands a technical foundation that makes your website's knowledge easy for algorithms to digest and cite.

To illustrate this shift, let's look at a direct comparison.

Key Differences Between Traditional SEO and AI Discovery SEO

This table breaks down the fundamental changes in focus needed to get your site ready for AI-driven search.

| Focus Area | Traditional SEO Approach | AI Discovery SEO Approach |
|---|---|---|
| Content Goal | Rank for specific keywords and attract clicks. | Become a citable source for AI-generated answers. |
| Key Metric | Keyword rankings and organic traffic. | Citations in AI answers and entity recognition. |
| Optimisation Tactic | On-page keyword optimisation and link building. | Structured data, semantic HTML, and E-E-A-T signals. |
| Architecture | Flat or siloed structures based on keywords. | Topic clusters and interconnected entity networks. |
| Success Signal | A #1 ranking on a search engine results page. | Your site being the primary source for an AI overview. |

As you can see, while there's overlap, the mindset is entirely different. It's a move from winning a visibility contest to building a machine-readable knowledge base.

The objective is no longer just to be visible, but to be citable. Your technical SEO must make your content the most logical and reliable choice for an AI to use as a source.

This evolution is absolutely critical for capturing high-intent traffic. When a potential customer asks an AI a question about your industry, you want your website to be the source of truth that shapes the answer. Mastering the principles of technical SEO for AI discovery is how you make that happen, ensuring your brand stays visible and authoritative where it truly matters now.

Building an AI-Ready Foundation with Smart Crawlability and Architecture

Before an AI model can ever recognise your content as a credible source, it first has to find its way around your website. This is where a clean, logical site architecture comes in. It's no longer just a nice-to-have for user experience; it's a non-negotiable for technical SEO for AI discovery. This foundation is what allows AI crawlers to efficiently access, understand, and map out the expertise you have to offer.

I often tell clients to think of their website like a library. If the books are just thrown on shelves at random, with no catalogue or clear signage, the librarian (or an AI crawler) is going to have a nightmare finding anything specific. A well-planned architecture, with clear pathways, guides these crawlers straight to your most important and relevant content, making your site far easier for large language models (LLMs) to digest.

Crafting a Logical URL Structure

The very first place to create this clarity is in your URL structure. AI systems are built to parse information logically, and your URLs are one of the first and most powerful clues they get about a page's content and its position in your site's hierarchy.

Let's look at two real-world examples for a page on technical SEO services:

- Confusing: anitech.au/index.php?id=8675309

- Clear: anitech.au/services/seo/technical-seo/

The second URL instantly tells everyone—users and AI crawlers alike—what the page is about and how it fits into the rest of the site. It establishes a clear, crawlable path from the homepage right down to a specific service, reinforcing the topical relationships across your entire domain.
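To make the difference concrete, here is a minimal Python sketch of what a parser can recover from each URL's path alone. The helper name `path_hierarchy` is an assumption for illustration, and the `https://` scheme is added so the standard library parses the two example URLs correctly.

```python
from urllib.parse import urlparse


def path_hierarchy(url: str) -> list[str]:
    """Return the non-empty path segments of a URL, in order."""
    path = urlparse(url).path
    return [segment for segment in path.split("/") if segment]


# The clear URL exposes a three-level hierarchy.
clear = path_hierarchy("https://anitech.au/services/seo/technical-seo/")
# The confusing URL exposes only a script name; the meaning is hidden in an opaque ID.
confusing = path_hierarchy("https://anitech.au/index.php?id=8675309")

print(clear)
print(confusing)
```

The clear URL yields the segments services, seo and technical-seo, which mirror the site hierarchy, while the confusing one yields only index.php.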

The Role of Internal Linking in Establishing Authority

Internal linking is the connective tissue that holds your website together. When done strategically, it does more than just help users find related content. It weaves a web of connections that demonstrates your topical depth and authority to AI models. It’s how you signal to an AI that you’ve built a comprehensive cluster of knowledge on a subject.

For example, a high-level pillar page on "Digital Marketing" should link out to more specific articles on topics like "SEO," "PPC," and "Content Marketing." Those articles should then link back to the main pillar page and, where it makes sense, to each other. This creates a powerful signal that you've built a well-organised knowledge hub. If you want to go deeper on this, exploring a blueprint for site navigation and information architecture is a great next step.

By creating tight thematic clusters with your internal links, you're essentially drawing a map for AI crawlers. You're highlighting your most important pages and spelling out the relationships between different pieces of content.
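A pillar-and-cluster structure like the one described above can be audited with a few lines of code. The following is a rough sketch, assuming you have already exported your internal links into a simple mapping of page to linked pages; the URLs and the function name `missing_cluster_links` are hypothetical.

```python
def missing_cluster_links(pillar: str, links: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Return (source, target) link pairs that a pillar/cluster layout expects but that are absent."""
    missing = []
    for page in links:
        if page == pillar:
            continue
        # The pillar should link down to every cluster page...
        if page not in links.get(pillar, set()):
            missing.append((pillar, page))
        # ...and every cluster page should link back up to the pillar.
        if pillar not in links[page]:
            missing.append((page, pillar))
    return missing


# Hypothetical internal-link export: page -> set of pages it links to.
site_links = {
    "/digital-marketing/": {
        "/digital-marketing/seo/",
        "/digital-marketing/ppc/",
        "/digital-marketing/content-marketing/",
    },
    "/digital-marketing/seo/": {"/digital-marketing/", "/digital-marketing/content-marketing/"},
    "/digital-marketing/ppc/": {"/digital-marketing/"},
    "/digital-marketing/content-marketing/": set(),  # forgot to link back to the pillar
}

print(missing_cluster_links("/digital-marketing/", site_links))
```

In this made-up data set, the only gap reported is the content marketing article that never links back to the pillar page.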

Optimising Your Crawl Budget for AI Agents

Every site has a crawl budget—the number of pages a crawler will visit within a certain timeframe. For larger websites, managing this budget is absolutely critical. You need to ensure AI crawlers are spending their limited time on your high-value, unique content, not getting stuck in a maze of outdated archives, duplicate pages, or messy faceted navigation.

Here are a few practical ways to direct AI crawlers where you want them to go:

- Use robots.txt Wisely: Block off sections that offer no value to an external crawler, like admin login pages, internal search results, or thank-you pages. This saves their resources for the content that truly matters.

- Implement noindex Tags: Some pages have value for users but not for search (think internal policy documents or certain archives). A noindex meta tag keeps them out of the index but still allows crawlers to follow any links on them. Don't also block these pages in robots.txt, or the crawler will never see the tag.

- Tame Faceted Navigation: E-commerce sites are notorious for creating thousands of URL variations through product filters. Use canonical tags to consolidate the variations you do allow, and robots.txt rules to stop crawlers from wasting time on all those thin, near-duplicate pages.
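Before deploying robots.txt rules like these, it helps to test them against a handful of real paths. Below is a small sketch using Python's standard `urllib.robotparser`; the rules and paths are illustrative assumptions, not a recommended configuration. Note that this parser does simple prefix matching and does not understand wildcard patterns.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt blocking low-value sections for all crawlers.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /search/
Disallow: /thank-you/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(path: str, agent: str = "*") -> bool:
    """Check whether the given user agent may fetch a path on the example site."""
    return parser.can_fetch(agent, "https://anitech.au" + path)


for path in ("/services/seo/technical-seo/", "/wp-admin/", "/search/?q=seo"):
    print(path, "->", "allowed" if is_allowed(path) else "blocked")
```

Running a check like this in a deployment pipeline is a cheap way to catch a rule that accidentally blocks a service page.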

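You also have newer options aimed specifically at AI systems. robots.txt itself accepts AI-specific user-agent tokens such as GPTBot or Google-Extended, so you can set different rules for different AI agents. Alongside that, llms.txt is an emerging proposal rather than an adopted standard: a Markdown file at your site root that points language models to your most important content. It doesn't control access the way robots.txt does, but it's worth experimenting with an llms.txt generator to see how it fits your site. Ultimately, a streamlined, crawlable architecture is the essential first step in making your website a trusted source for the next wave of search and discovery.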

Helping AI Understand Your Content with Structured Data

AI models are incredibly powerful, but they don't think like humans. A person visiting your website can easily figure things out from context, layout, and visual cues. An AI, on the other hand, needs clear, direct instructions. It thrives on clarity, not guesswork.

This is exactly where structured data comes in. You might know it as Schema markup, and it's one of the most effective tools we have for technical SEO for AI discovery. Think of it as a translator that turns your human-friendly content into a machine-readable format that AI systems can process instantly.

By adding structured data, you’re basically putting labels on all the important information on your page. You're telling the AI, "This is a recipe," "this number is the price of the product," or "this person is the article's author." It removes the ambiguity, transforming your webpage from a jumble of text into an organised database of facts that AI can easily understand and use.

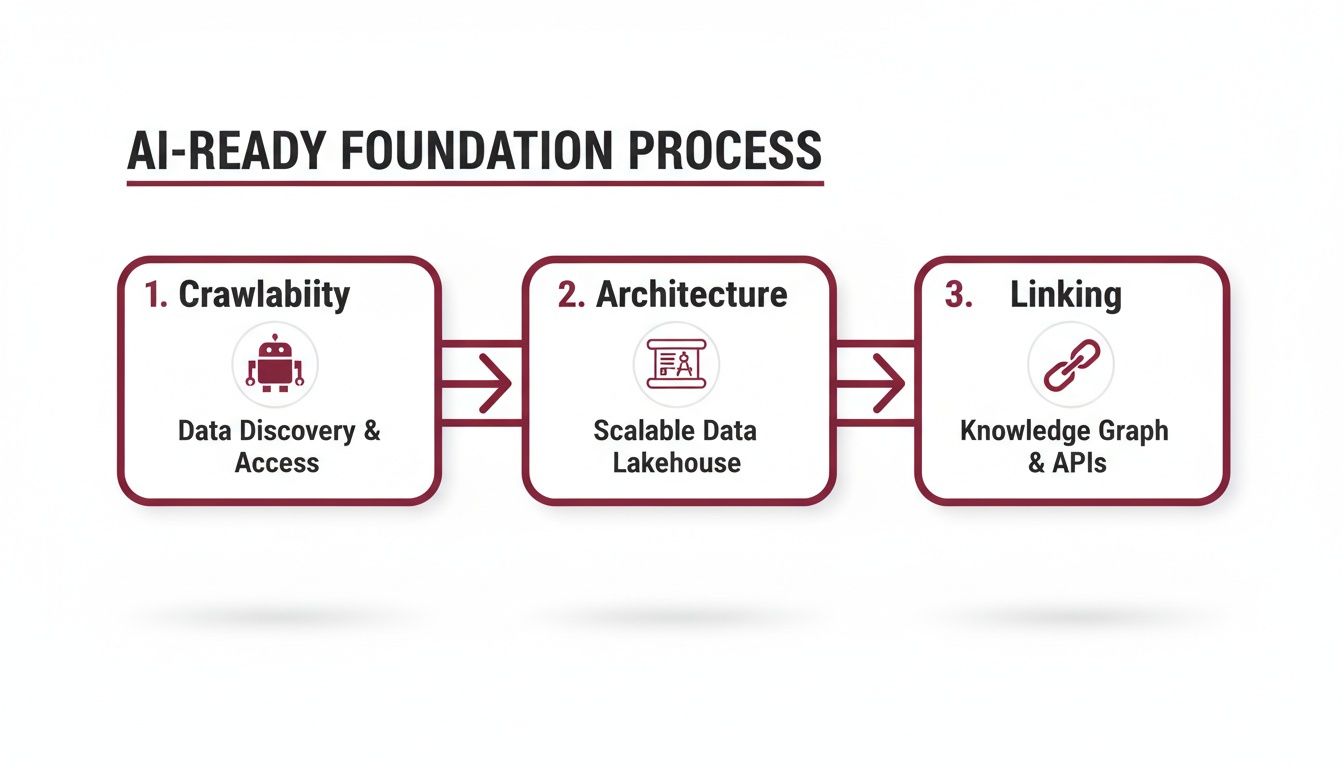

This whole process of building a clear, understandable foundation is what AI discovery is all about, and it rests on the core principles of crawlability, architecture, and internal linking.

As you can see, a technically solid website gives AI systems a clear path to crawl and interpret your content. This is the bedrock of effective AI discovery.

Why JSON-LD is the Way to Go

You have a few options for adding Schema markup to your site, but JSON-LD (JavaScript Object Notation for Linked Data) is the format Google recommends, and it's what most developers prefer. It’s a huge improvement on older methods that made you weave markup directly into your HTML.

With JSON-LD, you can place a single, self-contained block of code neatly in the <head> section of your page. This makes it far easier to manage, update, and troubleshoot your markup without ever touching your visible content. For an AI, it’s like being handed a perfectly organised dossier of information, which makes parsing your page’s content much more efficient.

The Most Important Schema Types for AI

The official Schema.org vocabulary has hundreds of types, but don't let that overwhelm you. You don't need to implement them all. The key is to focus on a core set that accurately describes your business and content. Getting these right will deliver the biggest impact.

Here are the non-negotiables to start with:

- Organization: This is your foundation. It defines your business as an entity, detailing your official name, logo, address, contact info, and social media profiles. It establishes exactly who you are.

- Product & Service: If you sell anything, these are essential. They let you specify details like price, availability, reviews, and service areas, giving AI precise data to use in commercial-intent answers.

- FAQPage: This markup is fantastic because it directly connects questions with their answers. Since a primary function of many AI models is to answer user questions, FAQPage schema literally hands them the information on a silver platter.

- Article or BlogPosting: For any site publishing content, this schema is a must. It identifies your work as an article and specifies the author, publication date, and headline, helping to signal expertise and timeliness.

Think of each Schema type as a detailed business card for a specific piece of information on your site. The more detailed and accurate your cards are, the better an AI will understand the full scope of what your business offers and knows.
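As an illustration of how directly FAQPage markup pairs questions with answers, here is a minimal Python sketch that builds the JSON-LD from a list of question and answer pairs. The function name and the sample question are assumptions; the property names (`mainEntity`, `acceptedAnswer`, `text`) follow the Schema.org vocabulary.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical FAQ content for demonstration only.
faq = faq_page_jsonld([
    ("Are sitemaps still useful for AI crawlers?",
     "Yes. An XML sitemap remains a reliable way to surface deep pages."),
])

print(json.dumps(faq, indent=2))
```

Generating the markup from the same data that renders the visible FAQ keeps the two in sync, which matters because the structured data should match what users see on the page.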

What Organisation Schema Looks Like in Practice

Implementing schema might sound intimidating, but the code itself is surprisingly logical. Here’s a basic example of what Organization schema in JSON-LD format could look like for a fictional business called Anitech.

{
  "@context": "https://schema.org",
  "@type": "Organization",
  "name": "Anitech",
  "url": "https://anitech.au",
  "logo": "https://anitech.au/logo.png",
  "contactPoint": {
    "@type": "ContactPoint",
    "telephone": "+61-123-456-789",
    "contactType": "customer service"
  },
  "sameAs": [
    "https://www.facebook.com/AnitechAU",
    "https://www.linkedin.com/company/AnitechAU"
  ]
}

This simple script clearly defines the company’s name, website, logo, and social profiles, creating an unambiguous identity for AI systems to reference. It's clean, simple, and incredibly effective.
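To get this onto a page, the JSON needs to sit inside a script tag of type application/ld+json. The sketch below shows one way to generate that tag and run a basic sanity check first. It reuses the example values above; the helper names and the list of required keys are assumptions for illustration, not an official validation rule.

```python
import json

organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Anitech",
    "url": "https://anitech.au",
    "logo": "https://anitech.au/logo.png",
}


def missing_keys(data: dict, required=("@context", "@type", "name", "url")) -> list[str]:
    """List any keys from our own (assumed) minimum set that are absent or empty."""
    return [key for key in required if not data.get(key)]


def jsonld_script_tag(data: dict) -> str:
    """Serialise a dict as a JSON-LD script tag ready to drop into a template."""
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


assert not missing_keys(organization)
print(jsonld_script_tag(organization))
```

A check like this only catches missing fields; a dedicated validator such as Google's Rich Results Test is still the place to confirm the markup is eligible for search features.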

For businesses here in Australia, the benefits of getting this right are massive. Technical SEO is crucial in our market for boosting AI discovery, and the data backs it up. Properly implemented structured data has been reported to lift click-through rates by as much as 30%.

This is particularly true for our local eCommerce retailers, where 53.3% of all traffic comes from organic search. In such a competitive space, every technical advantage counts. Research on the state of SEO and marketing in Australia confirms that structured data isn't just a "nice-to-have" anymore; it's a measurable performance driver.

Optimising Site Performance and Rendering for AI Crawlers

Page speed and how your content renders aren't just user experience metrics anymore; they’ve become fundamental trust signals for AI discovery. When AI crawlers from systems like ChatGPT, Perplexity, and Google need to process colossal amounts of data, a slow or clunky website is a dead end. These systems favour sites that load quickly and present content without a hitch, treating performance as a direct proxy for quality and reliability.

Here, we'll get into the nitty-gritty of diagnosing and improving your site's performance so every piece of content is fully accessible to bots. We’ll cover Core Web Vitals, smart rendering strategies for complex sites, and practical tips to smash those bottlenecks. The aim is to create an experience that both users and AI systems will reward with greater visibility.

Mastering Core Web Vitals for AI Trust

Core Web Vitals are the specific metrics Google uses to judge a page's real-world user experience. While they were built for people, they’ve become powerful indicators for AI crawlers, signalling a well-maintained and dependable website. A poor score screams "technical issues," which could easily stop an AI from crawling and understanding your content properly.

Let's break down the three metrics that matter:

- Largest Contentful Paint (LCP): This is all about speed. It measures how long it takes for the biggest piece of content—like a hero image or a massive block of text—to appear. For a crawler, a fast LCP means it can get straight to the good stuff without waiting. You need to aim for an LCP of 2.5 seconds or less.

- Interaction to Next Paint (INP): The successor to First Input Delay (FID), INP measures a page's overall responsiveness to user interactions. A low INP signals a smooth, frustration-free experience, which sends a huge quality signal to any AI system. Aim for an INP of 200 milliseconds or less.

- Cumulative Layout Shift (CLS): This metric tracks visual stability, measuring how much the page layout unexpectedly jumps around while loading. A low CLS score means the page is stable, preventing crawlers from getting a confused picture of your page structure as elements shift. Aim for a CLS of 0.1 or less.

Getting these vitals in check usually comes down to the fundamentals: compressing images, using browser caching effectively, and cutting down on render-blocking resources. Tools like Google PageSpeed Insights and Lighthouse are your best friends for finding exactly what’s holding your site back.
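Once you have field data from one of those tools, a small script can flag which pages need attention. This sketch rates each metric against Google's published Core Web Vitals thresholds (good / needs improvement / poor). The function name and the sample measurements are made up for illustration.

```python
# (good_max, needs_improvement_max) per metric, from Google's published thresholds.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate(metric: str, value: float) -> str:
    """Classify a Core Web Vitals measurement as good, needs improvement, or poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for a single page.
page_metrics = {"LCP": 3.1, "INP": 180, "CLS": 0.31}
for metric, value in page_metrics.items():
    print(f"{metric}: {value} -> {rate(metric, value)}")
```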

Taming JavaScript with Smart Rendering Strategies

Modern websites, especially those built on frameworks like React or Angular, lean heavily on JavaScript to render content. This is great for creating rich, interactive user experiences, but it can be a massive roadblock for crawlers if you're not careful. An AI bot might just see a blank page if it doesn't wait for all your scripts to execute, meaning it misses your content completely.

This is where your rendering strategy becomes a true game-changer for technical SEO for AI discovery.

Choosing the right rendering method is one of the most impactful technical decisions you can make. It directly determines whether AI crawlers can see your content as clearly as a human visitor does.

Here are the main options on the table:

- Server-Side Rendering (SSR): With SSR, the server generates the full HTML of a page before sending it to the browser. This is the gold standard for AI crawlers because they get a complete, content-rich document right away—no JavaScript execution required. For most content-heavy sites, SSR is the clear winner for maximum crawlability.

- Static Site Generation (SSG): SSG takes it a step further by pre-building every page into static HTML files when you deploy your site. This delivers blistering performance and flawless crawlability, making it a fantastic choice for sites where content doesn’t change on a minute-by-minute basis, like blogs or marketing websites.

- Dynamic Rendering: This is a clever hybrid approach. It detects who's visiting—human or bot—and serves a pre-rendered, static HTML version to crawlers while delivering the feature-rich, client-side version to real users. It’s a solid workaround for complex web apps where a full SSR rebuild just isn't practical.

Think about an Australian e-commerce business running a complex storefront on React. Switching from client-side rendering to SSR could be the difference between an AI system seeing every product detail perfectly or seeing absolutely nothing. Making your content available in that initial HTML payload is simply non-negotiable for AI visibility.
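A quick way to see what a non-rendering crawler sees is to look only at the text in the initial HTML response, ignoring anything JavaScript would add later. The sketch below does that with Python's standard `html.parser`; the two HTML strings are simplified, hypothetical stand-ins for a client-side-rendered and a server-side-rendered product page.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def text_in_initial_html(html: str) -> str:
    """Return the text available without executing any JavaScript."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


csr_html = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>'
ssr_html = ('<html><body><div id="root"><h1>Blue Widget</h1><p>$49.00</p></div>'
            '<script src="/app.js"></script></body></html>')

print(repr(text_in_initial_html(csr_html)))
print(repr(text_in_initial_html(ssr_html)))
```

The client-side-rendered page yields an empty string, while the server-rendered one already contains the product name and price. In practice you would fetch your live URL and search the result for a phrase you know should be on the page.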

Gaining a Competitive Edge with Advanced Tactics

Once you’ve nailed the fundamentals of site architecture and performance, it’s time to shift gears. To really stand out in the age of AI discovery, you need to pull some more advanced levers. These are the tactics that fine-tune how your site handles complexity, communicates directly with AI systems, and builds a resilient framework for whatever comes next in search.

Think of it this way: we’ve built the solid foundation, and now we’re adding the high-performance features that give you a real advantage.

Get Noticed Faster with Indexing APIs

Waiting for crawlers to stumble across your new or updated content is a frustrating waiting game. This is especially true for time-sensitive stuff like breaking news, job listings, or event details.

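This is where indexing APIs come in. They give you a direct hotline to search engines, letting you ping them the moment new content is ready for crawling. Be aware of the scope, though: Google’s Indexing API is officially supported only for pages carrying JobPosting markup or livestream video (BroadcastEvent) markup, while the IndexNow protocol, supported by Bing and several other engines, accepts any URL.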

Instead of passively hoping a bot swings by, you’re proactively pushing your URLs into the queue. This dramatically speeds up discovery and indexing, ensuring your most critical updates are seen by AI systems almost instantly. For a cutting-edge technical SEO for AI discovery strategy, this makes your site a far more reliable and timely source of information.
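For a sense of what a notification looks like, here is a minimal sketch that builds the request body for Google's Indexing API publish endpoint. It deliberately stops short of sending anything: a real call needs an OAuth 2.0 access token from a service account that has been added as an owner of the site in Search Console, and you should check Google's eligibility rules for the page types it accepts. The helper name and the job URL are hypothetical.

```python
import json

# Publish endpoint documented for Google's Indexing API (v3).
INDEXING_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"


def build_notification(url: str, deleted: bool = False) -> dict:
    """Build the JSON body telling Google a URL was updated or removed."""
    return {"url": url, "type": "URL_DELETED" if deleted else "URL_UPDATED"}


# Hypothetical job listing that has just been published.
body = build_notification("https://anitech.au/careers/technical-seo-specialist/")

print("POST", INDEXING_ENDPOINT)
print(json.dumps(body, indent=2))
```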

Taming Faceted Navigation for AI Clarity

If you run an e-commerce store or a large directory, you know that faceted navigation—all those filters for size, colour, brand, and so on—is essential for users. But for technical SEO, it can be a complete nightmare. Left unchecked, it can spawn thousands of near-duplicate URLs that dilute your authority and burn through your crawl budget.

Getting this right is all about preventing that chaos and showing AI crawlers a clean, organised product catalogue.

- Strategic robots.txt Disallow: Start by blocking crawlers from accessing URLs created by filter combinations that don't add much unique value. For instance, you might let Google index colour filters but block complex combinations like colour-and-size.

- Smart rel="canonical" Use: For the filtered pages you do allow crawlers to see, make sure they have a canonical tag pointing back to the main category page. This consolidates all your link equity and clearly tells AI which page is the master version.

- The nofollow Attribute: Be surgical with the nofollow attribute. Apply it to links for specific filter options you don’t want crawlers to waste time on, preserving your crawl budget for pages that actually matter.
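The canonical step above can be sketched in a few lines: strip the filter parameters from a faceted URL and point the canonical tag at what remains. The parameter names in `FILTER_PARAMS` and the example.com URLs are assumptions; substitute whatever your own storefront uses.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical filter parameters that create near-duplicate pages.
FILTER_PARAMS = {"colour", "size", "brand", "sort"}


def canonical_url(url: str) -> str:
    """Drop filter parameters and fragments, keeping any other query parameters."""
    parts = urlsplit(url)
    kept = [(key, value)
            for key, value in parse_qsl(parts.query, keep_blank_values=True)
            if key not in FILTER_PARAMS]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_tag(url: str) -> str:
    """Render the <link rel="canonical"> element for a (possibly filtered) URL."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


print(canonical_tag("https://example.com/shoes/?colour=red&size=9"))
```

In this sketch, non-filter parameters such as a page number are preserved, so paginated pages keep their own canonical URL. Whether that is right for your site is a design decision to make deliberately.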

Clarifying Your Global Content with Hreflang and Canonicals

For any business with an international audience, clearly communicating your content variations to AI systems is non-negotiable. Using hreflang and canonical tags together is the key to preventing confusion and making sure the right language or regional page gets served to the right users.

Hreflang tags are your way of telling search engines, "Hey, I have similar content, but it's tailored for different languages or regions." This is how they understand the relationship between, say, your Australian English page (en-au) and your UK English page (en-gb).

When you pair hreflang with rel="canonical", you create a rock-solid, unambiguous set of instructions. Each regional version of a page should carry hreflang tags listing every version, itself included, plus a self-referencing canonical tag. This setup leaves no room for interpretation and solidifies your site’s structure for a global AI audience.
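Here is a small sketch of the tag set one regional page would carry under that approach. The function name and the example.com URLs are hypothetical; the pattern shown is a self-referencing canonical followed by one hreflang alternate per version, including the current one.

```python
def hreflang_block(current: str, versions: dict[str, str]) -> str:
    """Render the canonical and hreflang <link> tags for one regional page.

    current  -- the hreflang code of the page being rendered, e.g. "en-au"
    versions -- mapping of hreflang code to absolute URL for every version
    """
    lines = [f'<link rel="canonical" href="{versions[current]}">']
    for code, href in versions.items():
        lines.append(f'<link rel="alternate" hreflang="{code}" href="{href}">')
    return "\n".join(lines)


# Hypothetical regional versions of the same pricing page.
pricing_versions = {
    "en-au": "https://example.com/au/pricing/",
    "en-gb": "https://example.com/uk/pricing/",
}

print(hreflang_block("en-au", pricing_versions))
```

Rendering the block from one shared mapping helps guarantee that every version lists the same set of alternates, which is what makes the annotations reciprocal.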

Getting your international signals right is a huge trust signal. It shows AI models that you are a thoughtful, authoritative source for specific regions, and that’s a powerful way to build credibility.

Even as AI continues to evolve, the core principles of good technical SEO aren't going anywhere. In 2025, with 93% of Australian searches powered by Google, fundamentals like managing site migrations and optimising Core Web Vitals are still mission-critical. This is especially true for e-commerce sites, where one technical slip-up can lead to getting deprioritised in SERPs where featured snippets already claim 8.6% of clicks.

If you want to dig deeper into why these basics still hold so much weight, you can explore detailed insights on the importance of technical SEO. A truly future-proof strategy is about mastering today’s best practices while keeping one eye firmly on tomorrow’s AI-driven landscape.

Got Questions About Tech SEO for AI?

As we all get our heads around this shift to AI-driven search, a lot of the same practical questions keep popping up. When you're in the trenches, it’s the "how" and "why" that matter most. Here are some straight-up answers to the queries I hear most often.

How Does E-E-A-T Fit into This AI World?

The principles of Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) are more important now than ever before. But here's the catch: for an AI, these aren't just fuzzy concepts. They have to be translated into cold, hard technical and on-page signals.

An AI can't shake your hand or tour your office, so it’s looking for the digital proof you leave behind.

- Expertise isn't just about what you know; it's about showing it. This means detailed, well-organised content, but also clear author bios with credentials and schema that explicitly tags your authors as experts in their field.

- Authoritativeness gets a huge boost from a clean, logical site structure that screams "we own this topic." It's also reinforced the old-fashioned way: with quality citations and links from other respected names in your industry.

- Trustworthiness is often a gut feeling for humans, but for AI, it’s a checklist. Are you using HTTPS? Are your pages fast (Core Web Vitals)? Is your privacy policy easy to find? These technical details build a foundation of trust.

Think of it this way: every technical decision you make either strengthens or weakens the E-E-A-T signals an algorithm can read from your site.

Are Sitemaps Still a Thing for AI Crawlers?

Absolutely, 100%. Don't let anyone tell you otherwise. While AI crawlers are getting smarter by the day, an XML sitemap is still one of the most reliable tools in your kit. It’s like handing a visitor a detailed, annotated map of your property instead of just hoping they find all the good stuff on their own.

Relying solely on internal links for discovery is a gamble, especially for large or complex sites. A sitemap gives the crawler a direct, prioritised to-do list, ensuring that pages buried deep in your architecture or with few internal links don't get missed. It’s a simple, foundational piece of tech SEO that pays dividends in crawl efficiency.
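If your CMS doesn't produce a sitemap for you, generating one is straightforward. The following is a minimal sketch using Python's standard `xml.etree.ElementTree`. The page list and dates are invented for illustration; the namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build an XML sitemap string from (absolute URL, last-modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date format, e.g. 2025-03-10
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages, including one that sits deep in the architecture.
sitemap_xml = build_sitemap([
    ("https://anitech.au/", "2025-03-10"),
    ("https://anitech.au/services/seo/technical-seo/", "2025-03-08"),
])

print(sitemap_xml)
```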

What's the Single Biggest Mistake People Are Making?

If I had to pick one, it’s this: treating structured data as an afterthought. It's stunning how many businesses still see Schema markup as a "nice-to-have" or something to get to "later." In the age of AI discovery, that’s a critical, costly error.

When you skip structured data, you're forcing AI models to guess what your content means. You’re leaving the interpretation of your business hours, your product prices, or the key takeaways from your articles completely up to an algorithm.

Neglecting Schema is like trying to explain a complex idea in a foreign country without a translator. You might get the point across eventually, but it's going to be slow, clumsy, and full of misunderstandings. Robust structured data is how you speak the native language of AI.

How Can I Tell if Any of This Is Actually Working?

This is the big one. Measuring the direct impact of technical SEO for AI discovery means we have to look beyond our beloved keyword ranking reports. While the toolsets are still playing catch-up, there are a few solid ways to gauge your progress.

- Look for citations: Start monitoring AI-generated answers for queries in your niche. Are AI Overviews, ChatGPT, or Perplexity citing your website as a source? This is the clearest sign you're on the right track.

- Check entity recognition: Use tools that analyse how well search engines "understand" the key entities on your site—the people, products, and concepts you talk about. When you see this recognition improve, you know your technical signals are getting clearer.

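- Dig into your log files: For a more hands-on approach, analysing your server logs is invaluable. You can see how often AI-specific crawlers like GPTBot or PerplexityBot are hitting your site and which pages they care about most. (Google-Extended, by contrast, is a robots.txt control token rather than a separate crawler, so it won't show up as a user agent in your logs.)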
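The log-file check can be sketched as follows, assuming your server writes the common "combined" access log format. The crawler tokens listed are ones the respective vendors publish, but the list is not exhaustive, and the sample log lines are fabricated purely to demonstrate the parsing.

```python
import re
from collections import Counter

# User-agent tokens of some AI crawlers (not an exhaustive list).
AI_AGENTS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot")

# Matches the request, status, size, referrer and user-agent parts of a combined log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def ai_hits(lines) -> Counter:
    """Count requests per (AI crawler, path) pair."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        user_agent = match["ua"].lower()
        for agent in AI_AGENTS:
            if agent.lower() in user_agent:
                hits[(agent, match["path"])] += 1
                break
    return hits


# Fabricated sample lines for demonstration only.
sample_log = [
    '203.0.113.5 - - [10/Mar/2025:10:00:00 +0000] "GET /services/seo/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.5 - - [10/Mar/2025:10:05:00 +0000] "GET /services/seo/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [10/Mar/2025:10:06:00 +0000] "GET /blog/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '192.0.2.9 - - [10/Mar/2025:10:07:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

for (agent, path), count in ai_hits(sample_log).most_common():
    print(f"{agent:15} {path:20} {count}")
```

Because user-agent strings can be spoofed, treat these counts as indicative; where a vendor publishes its crawler IP ranges, checking against those gives a more reliable picture.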

By shifting your focus to these new signals, you get a much more accurate picture of your true visibility and authority in this new AI-powered search world.

At Anitech, we build the technical foundations that get your business not just seen, but cited and trusted by AI. Our data-first approach to SEO is designed to prepare your website for the future of discovery. See how we can help you dominate Google rankings and drive real growth by visiting us at https://anitech.au.